LLMs and Teaching

Another post-semester reflection and summary of student data

Monday this week was the last day of instruction at my university, and the sigh of relief from both students and faculty was audible to people miles away. I deeply enjoy my job, and being a professor is a privilege I cherish all the more because of my working-class background. But I can’t lie. The three best parts of being an academic are June, July, and August. Part of May is, too, but the phrase isn’t as pithy if I have to clarify when exactly the spring semester is fully in my wake. I still have final projects to grade and other housekeeping items to take care of. Also, I’m getting a new office in a newly refurbished hall on campus, so I have to pack up my current office at some point soon.

But I didn’t write this post to bore you with the logistics of my semester wrap-up. Rather, I hope to regale you with my end-of-semester reflections on the evergreen topic of artificial intelligence (AI) in higher education.

I should remind you, and let any new readers know, that I have disdain for the term “artificial intelligence,” which has become more of a marketing buzzword than a name for a particular tool. I prefer “pattern engine,” which doesn’t lend itself to anthropomorphism, or, to avoid confusing others, “LLMs,” which is short for “large language models.” When people talk about “AI” in higher education, they really mean pattern engines or, more narrowly, LLMs.

As my semester comes to a close, I wanted to reflect on two things. The first is the state of LLM use among students at my institution. Every semester, my department fields a student survey, which for the past several waves has included a suite of questions about LLM use by students and their attitudes about these tools. Looking at this data helps me to better calibrate my own perceptions of LLM use among my students and avoid generalizing too much from my personal experience.

The second is a post mortem of my own attempts to ensure students are learning something in my classes rather than off-loading their learning to LLMs. I tried something in the fall semester that failed, and another something this semester that worked better. For any other educators out there, I thought it’d be helpful to share what I learned, or solicit feedback if you have better ideas than what I tried.

Without further ado, let me show you what the data says.

What the data says

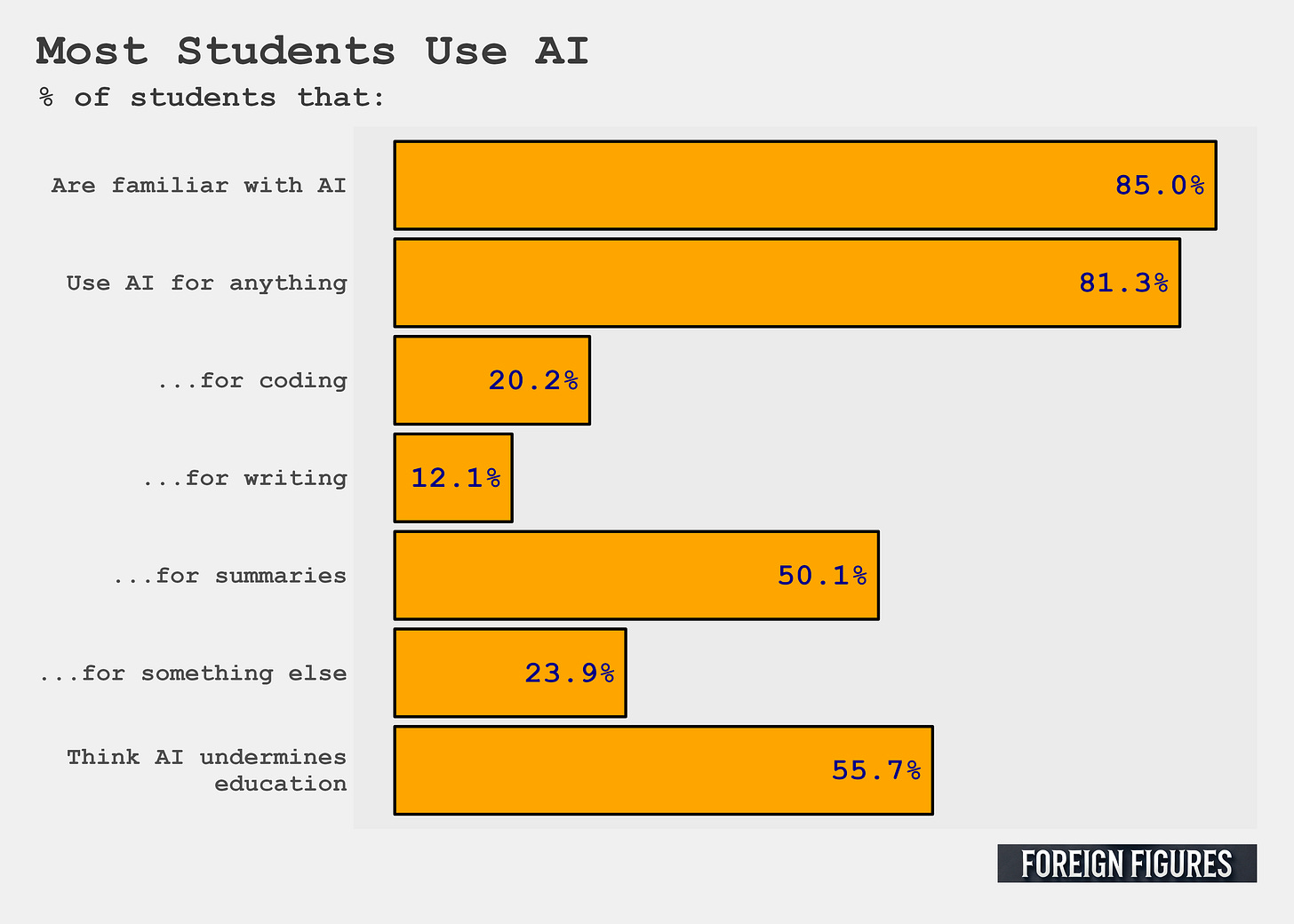

First of all, and no surprise here, the vast majority of students are familiar with and use “AI.” 81% of students said they used it for help with at least some coursework, which aligns with the figure I got from last semester’s student survey. By far, the most common way students use AI is to summarize their readings. 50% said they did this. Few reported using AI for writing or coding, but writing and coding assignments are rarer than reading assignments, which drives those numbers down.

The second finding I want to highlight is that, even though a majority of students use AI, almost 56% think AI “undermines education.” This paradox between use and attitudes is something I found in previous waves of the student survey.

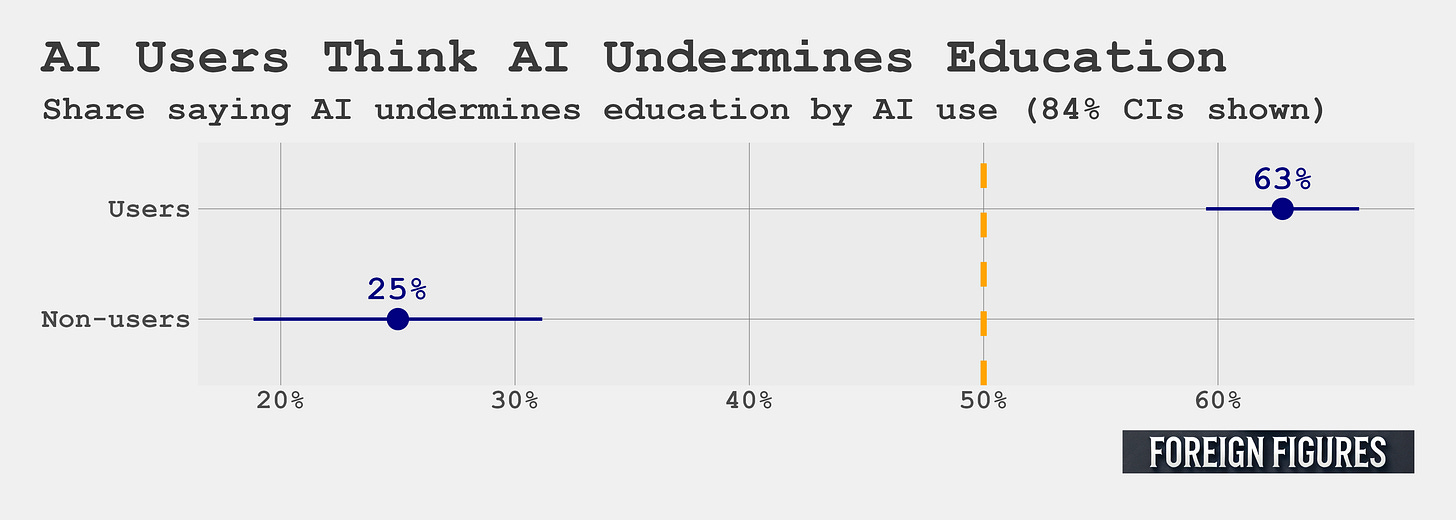

What’s most remarkable about it is that, as you can see in the second figure, using AI is strongly correlated with negative attitudes about AI’s impact on education. Among AI users, 63% agreed that AI undermines the value of education. Among non-users, 25% agreed. That difference is both statistically significant and chasmic.
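For readers who want to check the arithmetic, here is a minimal sketch in Python. It is not the code behind this post. The 81%, 63%, and 25% figures are the ones reported above. The sample size of 400 is purely a hypothetical placeholder, since the number of respondents isn’t stated here, and the function names are illustrative. The sketch also assumes the “AI users” group in the figure is the same 81% who reported using AI for coursework.

```python
from math import erfc, sqrt


def blended_share(share_users, rate_users, rate_non_users):
    """Overall agreement rate implied by the two subgroup rates."""
    return share_users * rate_users + (1 - share_users) * rate_non_users


def two_proportion_z_test(agree_a, n_a, agree_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a, p_b = agree_a / n_a, agree_b / n_b
    pooled = (agree_a + agree_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Percentages reported in the post: 81% users, 63% vs. 25% agreement.
overall = blended_share(0.81, 0.63, 0.25)
print(f"implied overall agreement: {overall:.1%}")

# HYPOTHETICAL counts: 400 respondents, 81% of them users (324 vs. 76),
# with agreement counts rounded to match 63% and 25%.
N_USERS, N_NON_USERS = 324, 76
AGREE_USERS, AGREE_NON_USERS = 204, 19

z, p_value = two_proportion_z_test(
    AGREE_USERS, N_USERS, AGREE_NON_USERS, N_NON_USERS
)
print(f"z = {z:.2f}, two-sided p = {p_value:.2g}")
```

The blended rate works out to roughly 56%, which squares with the overall figure reported earlier. The z statistic depends on the real sample size, so treat the test output as an illustration of the method rather than a result.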

I need to dig into the data a bit more to learn why this inverse relationship between use and attitudes exists. That seems like a good idea for a future post, so I’ll hold off for now. At this point, I’ll just say three things.

First, this relationship is an interesting puzzle. As a social scientist, I love counter-intuitive findings like this.

Second, if using AI does actually contribute to a better understanding of how it can undermine the value of an education, I have some hope for my students.

Third, in retrospect I wish this question were a bit more precise about what undermining education means. The exact wording of this question is “using AI in coursework undermines the value of a college education,” and students are asked to rate their level of agreement or disagreement with this statement. The statement itself is broad and open to interpretation. It also could invite some confusion. What if AI use is part of an assignment? What if AI use just means doing background research to find sources for writing a paper? What if AI use means looking up a solution to troubleshoot some buggy code? I think some forms of AI use absolutely undermine the value of a college education, but using it as a glorified search engine or troubleshooting tool? Not so much.

Overall, the main takeaway from the data is that supermajorities of students use these tools, but many are worried about the impact on their education. That’s a clear sign to me that many students are looking for help navigating this brave new LLM-riddled world that was foisted upon them, without their consent or input.

Speaking of which, what am I doing in the classroom to help?

Pedagogical successes and failures

My approach to guiding students can sound to some like I fall on the Luddite end of the LLM-use spectrum, but as I’ve written before, I think my approach is justified. Most of the research I’m aware of demonstrates clear harms posed by LLMs to educational outcomes and only modest, uncertain benefits.

I therefore try to discourage LLM use in my classes, which squares with the, on average, AI-undermines-education sentiment of the typical student at my institution. You’re welcome, students!

But, ya know, students still use LLMs, so there’s that.

I haven’t quite ironed out all the wrinkles in my approach, which I expect will be an ongoing problem for years to come. So far I’ve run two “grand experiments” to tamp down on LLM use. One I tried last semester, which failed. Another I tried this semester, which worked better but still needs some refinement.

I guess I should say that my goal isn’t to totally discourage LLM use. I think I’m coming around to the opinion that I don’t care if students use it or not, by which I mean, my objective isn’t to minimize or eliminate LLM use. My objective, rather, is that students are learning and taking ownership of their educational journey. I think you can easily use LLMs to off-load the kinds of cognitive friction that produce growth and learning, but you can also use LLMs to improve your learning. It’s all about how you use the tool and yada yada... However, I think the temptation to off-load is too great for a group of people without fully formed prefrontal cortices, so I’m clear with my students from day 1 that they should strive to do their own work. They deserve a fighting chance at developing mastery. Ultimately, though, the assessments I use in my courses are meant to measure whether students are mastering the things I’m teaching them. I don’t bother checking if they use LLMs or not, and I don’t directly penalize LLM use when I see it.

My approach last semester was randomized “audits.” I teach a data-centric undergraduate political science curriculum befitting a program that calls itself Data for Political Research. I therefore teach a lot of statistical programming alongside substantive issues and theories in political science. LLMs are really good at producing code, and this is where I tend to see the most blatant off-loading of cognitive burden. As a result, grades for the coding-intensive weekly assignments in my courses started creeping up, and submissions started to look too uniformly good. So last semester I instituted randomized audits of student submissions where I’d randomly select a few students to come to my office and replicate a portion of the assignment in front of me to check whether they were capable of producing the work they originally submitted. My intention was to provide an incentive for students to understand the work they were producing, whether they used LLMs or not.
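For any educator who wants to try something similar, the random draw itself is easy to script. What follows is a minimal sketch in Python, assuming a hypothetical roster and a fixed seed so the selection can be re-run and shown to students if anyone questions it. It is not necessarily how the audits described above were drawn, and the names and the choice of three students per week are placeholders.

```python
import random


def pick_audit_students(roster, k, seed=None):
    """Return k distinct students drawn uniformly at random from the roster."""
    if k > len(roster):
        raise ValueError("cannot audit more students than are enrolled")
    rng = random.Random(seed)  # a private generator keeps the draw reproducible
    return rng.sample(list(roster), k)


# HYPOTHETICAL roster; replace with the actual class list.
roster = [
    "Student A", "Student B", "Student C", "Student D",
    "Student E", "Student F", "Student G", "Student H",
]

# A different seed each week (here, the week number) gives a fresh draw
# that can still be reproduced later.
week_3_picks = pick_audit_students(roster, k=3, seed=3)
print(week_3_picks)
```

Sampling without replacement means no one is picked twice in the same week, though the same student can still come up again in a later week.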

The audits failed, and for predictable reasons. I guess hindsight is 20/20, so I’ll grant myself a bit of grace.

They failed in four ways:

The first is that they didn’t actually produce an appreciable change in student willingness to do their own work. I still saw plenty of LLM-generated submissions, leaving me, still, without a way to verify what students actually understand.

The second is that the audit created performance anxiety, even for students that I knew for certain were actually trying in the class and doing their own work. When you teach small classes at a small liberal arts college, you get to know your students, and you quickly develop a sense for who’s trying and who isn’t. Some of the former failed audits, and it was clear from the start the problem was anxiety about doing work in front of the professor. This isn’t quite what I had in mind.

The third problem is that this approach, to work well, required me to be really on top of grading. The audits would come the week after an assignment was due and grades and feedback on submissions had been provided. That way students could enter the audit with some comments from me about the quality of their work. The audit was, in some ways, an opportunity for students to improve on major mistakes they made in their original submission. All good stuff, but to put it indelicately, I suck at timely grading. As any academic can attest, there’s just a lot of stuff to get done each week. When I’m overwhelmed, grading is my last priority. It then starts to pile up. The growing pile creates anxiety, which then leads to procrastination. It’s a vicious cycle. For audits to run on schedule, I needed to magically fix this problem and I failed to find that magic.

Finally, the audits were time-consuming. As I said, I have a lot going on already. The audits made everything worse.

For all these reasons I scrapped the audits this semester, and I wouldn’t recommend them to others. Any educators out there are welcome to give them a try, and maybe you’ll have more success. I’m done with them.

This semester I tried a new, two-pronged approach. First, I assigned weekly practice sets, which I graded purely on participation. Second, those practice sets were meant to help students practice for a weekly in-person assessment, which was a glorified pencil-and-paper quiz. (Yes, you can go analogue for coding assignments!)

This approach worked better, and I’ve jotted some observations below:

First of all, where before I witnessed suspiciously high uniformity and quality of student grades, I saw far more diversity in responses and variation in overall performance.

I also believe I got a better glimpse of what students were absorbing in class. You can still cheat on a pencil-and-paper quiz, but it’s a lot harder than cheating on a take-home assignment where a helpful LLM is at your beck and call, ready to do the hard work for you. I have small classes, so it was easy for me to keep tabs on the room. If a student still found a way to cheat, I feel weirdly awed by their level of commitment to academic dishonesty.

Another pro is that the least invested students performed the worst on the in-person assessments. Before, many of these same students would have no issue passing the weekly assignments, often with flying colors. That was a red flag. It also was a bad incentive structure. Students should be rewarded for paying attention and investing time in class.

Finally, grading in-person pencil-and-paper quizzes is a lot better from a quality-of-life perspective on my part compared to grading submissions on an online portal. To grade, I put my laptop away, I grabbed a red pen, and I worked through a physical stack of quizzes. I still hate grading, but I hate this style of grading a little less.

I still need to make some fixes to this approach to weekly assessments. The main problem, as always, is my congenital slowness in grading. I fell behind yet again this semester. But I have ideas I want to try next semester to solve this problem. It’ll probably be something like self- or peer-grading, or simply going over an answer key in class. These are easy things to implement that, in retrospect, I should have thought through ahead of time.

These are all minor changes. The general idea of in-person, analogue assessments is here to stay for me. I think they create the right incentives for students to make sure they understand their code, and other substantive issues and theories covered in class, whether students use LLMs or not.

I’ve thought about other solutions, like AI detectors, or history tracking in something like a Google Doc. I don’t trust the detectors, and there’s something annoyingly circular about using LLMs to deter their use. History tracking just sounds like more work when I’m already struggling to keep up with the amount of grading on my plate. Adding to the mix a high-speed viewing of a student writing out code and writing up an analysis, if you’ll forgive the profanity, sounds like hell.

Beyond the weekly pencil-and-paper assessments, I still assign 2-3 team-based, applied research papers for my classes. While the temptation for LLM use is high, amazingly, blatant LLM use is less of an issue in these assignments than you’d think, and for that I’m grateful to be at an institution that has a higher than average number of motivated students. The papers where LLM use is painfully obvious tend to be not so good anyway, and students, I’ve found, also tend to rat out members of their group who do use LLMs. Maybe that’s a problem, but I’ll take any self-correction from students I can get. I mostly grade these assignments the same way I always did. So far, that’s working out fine.

Wrapping up

I’ll end this thing by just repeating two things that keep showing up in the data. The vast majority of students use LLMs for help with coursework, but most also worry about how these tools undermine the value of a college education.

As an instructor, I feel like a part of my job now is figuring out how to ensure students actually understand what I teach them, which means I need to create incentives that favor accepting cognitive friction rather than rewarding cognitive off-loading. For me, that means going analogue with weekly pencil-and-paper quizzes, while still holding onto team-based applied research projects that students work on outside of class (and inside class, too). My objective isn’t to discourage or encourage LLM use. That’s completely orthogonal to my goals. I want students to be challenged by my classes, to learn something, and to develop some curiosity about the political world, along with the motivation and skills to collect data and other forms of evidence to help them find their own answers to questions. If someone can show me clear and replicable evidence that LLMs offer clear benefits to the learning process, I’ll make their adoption a more explicit and common part of my classes. Until then, I do my best with more traditional tools.

Code for the analysis in this post can be found here.

Thanks for reading or listening! You can support Foreign Figures by liking, sharing, buying me a coffee, or becoming a free or paid subscriber.